Two weeks ago, New Scientist wrote an excellent article alluding to many of the social science themes we cover. We’ll start with two thought-experiments noted in the article that illustrate human selfishness or irrationality:

1. Imagine an outbreak of disease threatening a small town of 600 people. Given budget constraints, we can develop treatment A, which is guaranteed to save 200 people, or treatment B, which has a one-in-three chance of saving everyone and a two-in-three chance of saving no one. Which would you pick?

2. Imagine a different outbreak in a different town, with another choice between two treatments: treatment C will certainly kill a mere 400 people, but treatment D has a one-in-three chance of saving everyone and a two-in-three chance of saving no one. Again, which would you choose?

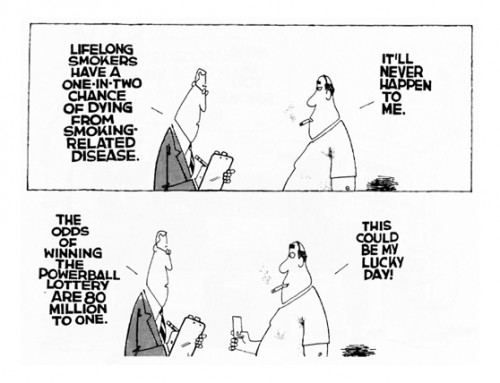

As researchers Daniel Kahneman and Amos Tversky discovered, most people preferred treatments A and D – even though A and C are identical, just as B and D are identical. This was originally construed as “loss aversion” – the idea that people rue losses more than they celebrate gains – but scientists have gone further than that. As the recent article posits, “the argumentative theory offers a new twist, suggesting that participants in these experiments chose the response that will be easiest to justify.” In the above example, someone’s self-justifying desire to be the definite savior of 200 people kicks in for example 1, but someone’s aversion to ensuring the death of 400 precludes the equivalent choice in example 2. Given these inconsistent preferences, the extent to which moral language irrationally appeals to our desire for justification is clear.
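For anyone who wants to check the arithmetic behind "A and C are identical, just as B and D are identical," here is a minimal sketch in Python. It assumes, as the two scenarios imply, that both towns have 600 people and that everyone not saved dies. The names `TOWN`, `treatments`, and `expected_saved` are made up for this illustration and do not come from the article.

```python
from fractions import Fraction

TOWN = 600  # assumed population in both scenarios


def expected_saved(outcomes):
    """Expected number of survivors for a list of (probability, saved) pairs."""
    return sum(p * saved for p, saved in outcomes)


# Each treatment is written as (probability, number of people saved).
treatments = {
    "A": [(Fraction(1), 200)],                           # saves 200 for certain
    "B": [(Fraction(1, 3), TOWN), (Fraction(2, 3), 0)],  # all or nothing
    "C": [(Fraction(1), TOWN - 400)],                    # 400 die, so 200 live
    "D": [(Fraction(1, 3), TOWN), (Fraction(2, 3), 0)],  # all or nothing
}

for name, outcomes in treatments.items():
    print(name, expected_saved(outcomes))
```

Written out this way, A and C turn out to be the same list of outcomes, as do B and D, and all four treatments save 200 people on average. Only the wording (lives saved versus deaths caused) differs between the two scenarios.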

This “news” is not particularly novel with regard to humanity’s propensity toward self-justification – we have been about that business for a while. Indeed, another behavioral fallacy cited is “confirmation bias,” the idea that our reasoning is “curved in on itself” in such a way that we register facts confirming our beliefs far more than facts refuting them. The article’s new contribution, however, is a survey of a new theory to explain this; namely, that mankind developed in such an argumentative way because persuasion was so crucial to evolutionary success. Leaving Christian debates about evolution aside, we can appreciate evolutionary science’s acknowledgement of self-justification’s importance – as well as the fact that the need for such justification, in the moralistic terms cited above, casts this idea in a decidedly religious (dare I say Christian?) fashion.

The paper first articulating this argumentative theory – in short, that mankind evolved to be distinctively self-justifying – is so important that mbird-favorite Jonathan Haidt suggested that its abstract “should be posted above the photocopy machine in every psychology department.” Despite this importance in the field of psychology, however, it does sometimes seem like Martin Luther, Paul of Tarsus, or even astute common-sense people-watchers could have deduced insights similar to these. For this reason, it seems incredible that it’s taken a few centuries for science to begin challenging the humanist or Enlightenment idea of the rational human being.

The very issue of rational vs. limited anthropology, however, illustrates confirmation bias on a deep level of human nature. In this sense, confirmation bias’s tilt towards the ego is both the mechanism and the content: academia is often behind the curve because of our desire to affirm the previously held consensus, but we could expect this bias to be particularly strong on anthropology because the very consensus being challenged is that of a rational, fundamentally objective or scientific man.

The upshot of all of this is a reaffirmation of humility. Taking the argumentative theory as a premise, New Scientist’s article explores disputation’s role in group intelligence. The findings of recent psychology are startling:

A group’s [intellectual] performance bears little relation to the average or maximum intelligence of the individuals in that group. Instead, collective intelligence is determined by the way the group argues – those who scored best on [their] tests allowed each person to play a part in conversations. The best groups also tended to include members who were more sensitive to the moods and feelings of other people…

Individual knowledge and analytical reasoning …can encourage us to justify our biases and bolster our prejudices. ‘We believe that our intelligence makes us wise when it actually makes us more susceptible to foolishness,’ says [a researcher]. Puncture this belief [in individual wisdom], and we may be able to cash in on our argumentative nature while escaping its pitfalls.

[youtube=http://www.youtube.com/watch?feature=player_detailpage&v=3MNFJBbiRNE&w=600]
